We have worked with many clients to help reduce or control their AWS cloud costs. Across our engagements, we have seen 5 recurring challenges to cloud cost control:

- Oversizing

- Software Inefficiency

- Inelasticity

- Sub-Optimal Architecture

- Forecasting Complexity

For the purposes of this post, we exclude the relatively trivial challenge of simply switching capacity off when it is not being used.

1. Oversizing

This is the most common reason for overspend and the simplest to solve. Several factors contribute to it, and they are often rooted in the design process:

- Capacity added even when there is sufficient headroom

- Inaccurate sizing due to weak performance testing methodologies

- Inaccurate demand forecasts

- Excess capacity put in place to compensate for software bottlenecks

The last of these is a particular problem when the 'temporary fix' becomes a permanent solution!

2. Software Inefficiency

Software efficiency is one of Capacitas’ 7 Pillars of Performance. Efficiency is defined as the amount of compute resource required per transaction and is a critical lever in the control of your cloud costs. This is particularly pertinent for high-volume systems.
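As a minimal sketch of the definition above, efficiency can be tracked as CPU seconds consumed per transaction and compared against a target. The `Sample` structure, the function names and all the numbers are hypothetical, chosen only for illustration. In practice the inputs would come from your own monitoring data.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class Sample:
    """One measurement interval for a service (hypothetical structure)."""
    cpu_seconds: float   # total CPU time consumed in the interval
    transactions: int    # transactions completed in the interval


def cpu_seconds_per_transaction(samples: Sequence[Sample]) -> float:
    """Efficiency as total CPU seconds divided by total transactions."""
    total_cpu = sum(s.cpu_seconds for s in samples)
    total_tx = sum(s.transactions for s in samples)
    if total_tx == 0:
        raise ValueError("no transactions recorded")
    return total_cpu / total_tx


def meets_target(samples: Sequence[Sample], target: float) -> bool:
    """Check measured efficiency against an efficiency target (an NFR)."""
    return cpu_seconds_per_transaction(samples) <= target


# Illustrative numbers only, not taken from any client engagement.
before = [Sample(cpu_seconds=1200.0, transactions=10_000)]
after = [Sample(cpu_seconds=300.0, transactions=10_000)]

print(cpu_seconds_per_transaction(before))  # 0.12
print(cpu_seconds_per_transaction(after))   # 0.03
print(meets_target(after, target=0.05))     # True
```

Tracking this figure release by release is one way to notice an efficiency regression before it turns into extra capacity.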

Where does software inefficiency stem from?

- Not measuring efficiency

- Not knowing what 'good' efficiency looks like

- Not having efficiency targets (non-functional requirements)

- Other priorities on developers' time

How important is this? In one client engagement, we identified a series of software optimisations and worked with the client's developers to implement them. These optimisations reduced the operating cost of the IT service from $3.3M to $0.3M per year.

3. Inelasticity

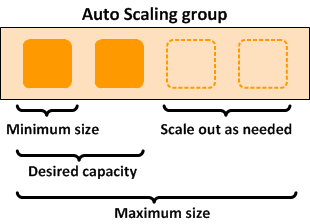

AWS autoscaling is a great way to control cloud cost by adjusting capacity to meet changing demand.

However, applications that are inefficient and/or require long warm-up times cannot autoscale quickly enough to follow demand. Components that tend to be inelastic include databases, caches and inefficient applications.

In one example, a customer's standard practice was to trigger autoscaling at 50% CPU utilisation on all of their systems. For one particular application, this resulted in $1M per year in unnecessary spend.
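To illustrate why a blanket utilisation threshold matters, here is a rough sketch of how the steady-state fleet size depends on the target utilisation. It assumes throughput scales linearly with CPU and with instance count, which real applications only approximate. The function name and the figures are hypothetical and are not taken from the example above.

```python
import math


def instances_needed(demand_tps: float, tps_per_instance: float,
                     target_utilisation: float) -> int:
    """Instances required to serve demand at a given target CPU utilisation.

    tps_per_instance is the throughput one instance sustains at 100% CPU
    (a simplifying assumption: throughput is linear in CPU utilisation).
    """
    if not 0 < target_utilisation <= 1:
        raise ValueError("target_utilisation must be in (0, 1]")
    usable_tps = tps_per_instance * target_utilisation
    return math.ceil(demand_tps / usable_tps)


# Illustrative demand of 3,000 transactions per second.
at_50 = instances_needed(3000, tps_per_instance=100, target_utilisation=0.50)
at_75 = instances_needed(3000, tps_per_instance=100, target_utilisation=0.75)

print(at_50)  # 60
print(at_75)  # 40
```

Whether a higher target is safe for a given application depends on its warm-up time and on how quickly its demand changes, so the threshold is best set per application rather than as a single default.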

4. Sub-Optimal Architecture

Choosing a sub-optimal architecture for your workload will lead to higher cloud costs. We've seen this occur when teams are under time pressure to simply 'lift and shift' to the cloud.

The best way to avoid this is to measure and model your workload, then choose an appropriate architecture to service it. Workload modelling involves characterising the workload over multiple dimensions, for example:

- Persistent vs transient

- Synchronous vs asynchronous

- Transaction size

- Read vs write workload

- Processor service time

- Disk service demand

- Network service demand

- Disk storage demand

- Memory service demand
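One way to make a few of these dimensions concrete is to record them per workload and derive resource demand from them. The sketch below is only an illustration: the `WorkloadProfile` fields and example values are hypothetical. It applies the Utilisation Law (busy resource = throughput × service time) to estimate CPU demand, and a simple product for network demand.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkloadProfile:
    """A few workload dimensions, recorded per service (hypothetical fields)."""
    name: str
    persistent: bool             # persistent vs transient
    synchronous: bool            # synchronous vs asynchronous
    peak_tps: float              # peak transactions per second
    read_fraction: float         # share of transactions that are reads
    cpu_service_time_s: float    # processor service time per transaction
    network_bytes_per_tx: float  # network service demand per transaction

    def busy_cores(self) -> float:
        """Utilisation Law: average busy cores = throughput x service time."""
        return self.peak_tps * self.cpu_service_time_s

    def network_mbit_per_s(self) -> float:
        """Network demand at peak, in megabits per second."""
        return self.peak_tps * self.network_bytes_per_tx * 8 / 1_000_000


# Illustrative values only.
profile = WorkloadProfile(
    name="order-api",
    persistent=True,
    synchronous=True,
    peak_tps=400,
    read_fraction=0.9,
    cpu_service_time_s=0.025,
    network_bytes_per_tx=50_000,
)

print(f"{profile.busy_cores():.1f} cores busy at peak")
print(f"{profile.network_mbit_per_s():.1f} Mbit/s at peak")
```

Comparing profiles like this across services can show, for example, which workloads are transient or asynchronous enough to suit a different architecture from the one they were lifted and shifted onto.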

5. Forecasting Complexity

In AWS, budget forecasting must account for 3 different demand drivers:

- Introduction of new services

- Changes in business demand for existing services

- Changes in software efficiency on existing services - driven by software releases

This forecasting is complex: it requires process, skills and the right data. The supply-side modelling is also complex, because each AWS offering has its own cost model.
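The three demand drivers can be combined in a deliberately simplified sketch like the one below. It assumes cost is proportional to compute demand, which ignores the supply-side pricing differences just mentioned. The `ServiceForecast` fields and all figures are hypothetical.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class ServiceForecast:
    """Forecast inputs for one service (hypothetical structure)."""
    name: str
    baseline_monthly_cost: float   # current spend; 0 for a new service
    demand_growth: float = 0.0     # e.g. 0.10 = 10% more business demand
    efficiency_change: float = 0.0 # e.g. -0.20 = 20% less compute per transaction
    new_service_cost: float = 0.0  # estimated spend for a newly introduced service


def forecast_monthly_cost(s: ServiceForecast) -> float:
    """Scale existing spend by demand and efficiency, then add new-service spend.

    Assumes cost is linear in compute demand, which is a simplification.
    """
    existing = (s.baseline_monthly_cost
                * (1 + s.demand_growth)
                * (1 + s.efficiency_change))
    return existing + s.new_service_cost


def forecast_total(services: Sequence[ServiceForecast]) -> float:
    return sum(forecast_monthly_cost(s) for s in services)


# Illustrative numbers only.
services = [
    ServiceForecast("checkout", baseline_monthly_cost=10_000,
                    demand_growth=0.10, efficiency_change=-0.20),
    ServiceForecast("recommendations", baseline_monthly_cost=0,
                    new_service_cost=2_500),
]

for s in services:
    print(f"{s.name}: {forecast_monthly_cost(s):,.0f} per month")
print(f"total: {forecast_total(services):,.0f} per month")
```

Keeping the drivers as separate inputs makes it easier to see whether a change in forecast spend comes from the business, from a software release, or from a new service.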

Summary

Once these challenges are solved, you'll have a right-sized, efficient, optimised and elastic cloud in place. However, cloud cost optimisation needs to continue throughout the production lifecycle as your demand and IT services evolve.

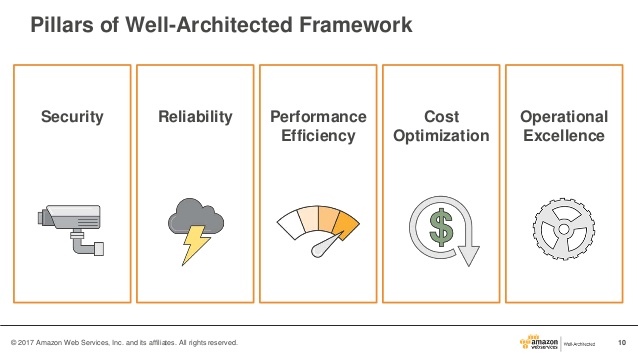

AWS also highlights these focus areas and provides extensive guidance on cost optimisation in its Well-Architected Framework.